Literally no one talks about the PRICE you pay for AI Slop

Last year, your team wrote 200 lines of code a day. This year, with AI, they write 1,200. Congratulations... you just entered the era of AI Slop.

Last year, your team shipped more slowly. This year, with AI, they ship 2x faster.

You should have every reason to smile, right? DAMN... you simply can’t.

Your velocity is at its peak. PR count went through the roof. And demo day energy was immaculate.

But the picture changes after three sprints, whether you run agile or lean.

Bugs multiply quietly

Senior engineers “just tweak” things for hours

Roadmaps look impressive, but retention is slipping

Decision makers stay happy; your codebase does not

And unknowingly, you just entered the era of AI Slop.

I’ve spent 17 years building SaaS products, and this is what happens when teams start relying heavily on generated output. The problem isn’t speed, it’s lack of control over what actually gets shipped. I broke this down here.

The Hidden Costs That Never Make It to the Dashboard

AI Slop starts quietly, right when everything seems to be working. It doesn’t arrive all at once; it creeps in through small shifts:

Speed replaces thinking

Output replaces ownership

“It works” replaces “it’s right.”

There is no warning sign. No dramatic entrance.

Debug debt grows because the code compiles and the tests pass. Nobody questions it. By the time someone traces the root cause to an AI-generated function from two sprints ago, the fix costs ten times what a single review would have taken.

False productivity keeps metrics healthy while the backlog absorbs the damage. A Workday survey of 1,600 companies found that nearly 40% of AI’s stated value is being lost to rework and misalignment.

Decision atrophy is the quietest cost. Engineers stop questioning outputs, and one day, they can’t make the call when it matters.

I keep seeing this pattern across teams. The slop does not announce itself. It hides inside green CI pipelines and closed Jira tickets.

What I have covered:

[1:24] The rework tax: 40% of AI’s value is lost because employees spend nearly 2 hours fixing every single instance of AI Slop.

[2:14] The real cost: For a 10,000-person company, unquestioned AI output costs over $9 million in lost productivity every year.

[3:06] The trust trap: Polished output gets passed on unreviewed, and that is exactly how one person’s slop becomes the whole team’s problem.

Your Best Engineers Are Paying a Tax Nobody Files

AI-generated output often looks right. It compiles, passes tests, and reads confidently. The flaws surface later, and by then your best engineers aren’t building; they’re fixing shortcuts, untangling logic, and reviewing work they didn’t create.

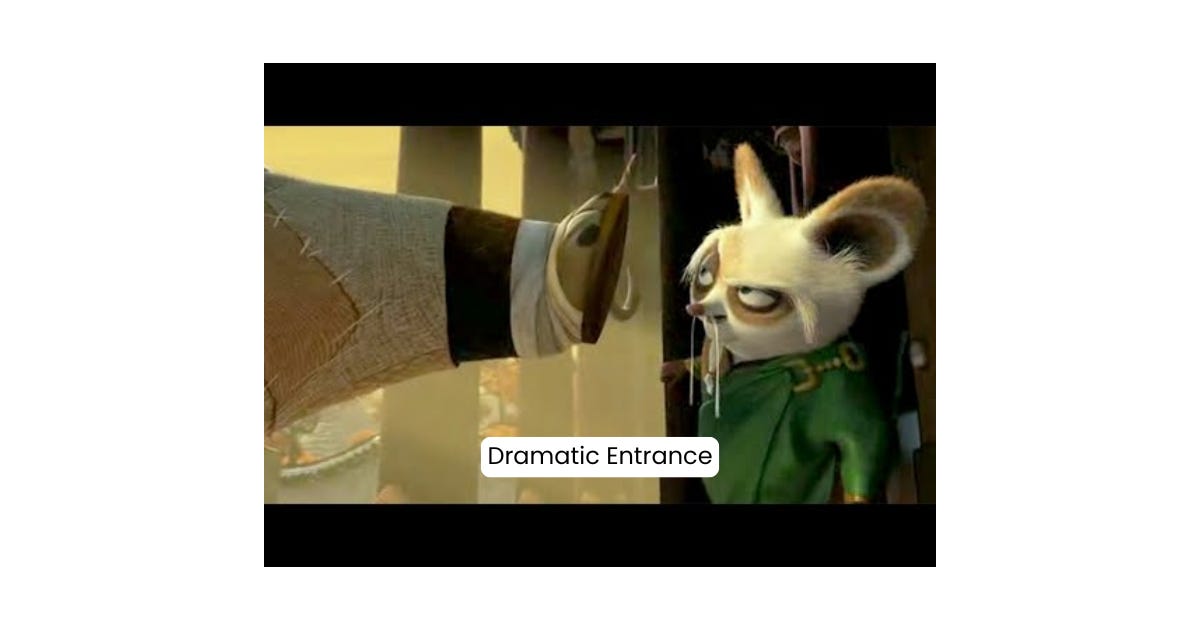

If AI saves five hours but creates eight hours of subtle maintenance later, you did not gain efficiency. You deferred clarity, and deferred clarity does not disappear.
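To make that arithmetic concrete, here is a minimal Python sketch. The function name `net_hours` and its parameters are made up for illustration, and the five and eight are the hypothetical figures from the paragraph above, not measured data.

```python
def net_hours(saved_hours: float, later_maintenance_hours: float) -> float:
    """Hours actually gained once deferred maintenance is counted.

    Both inputs are illustrative estimates, not measurements.
    """
    return saved_hours - later_maintenance_hours


# The example from the text: five hours saved, eight hours of cleanup later.
print(net_hours(5, 8))  # -3, a net loss of three hours
```

A negative result means the saved time came back as maintenance. The number itself matters less than the habit of counting the later hours at all.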

You might also have Yes Man Engineers on your team who will embrace AI loudly, present the metrics proudly, and never raise a flag. They are your biggest risk.

I have written about this before, and I stand by every word. If output increases but outcomes do not, you are not scaling; you are decorating.

The GTM Risk Nobody Talks About in the Room

When engineering moves faster, GTM follows the noise. Marketing launches features before they mature, sales promises capabilities before edge cases are handled, and nothing damages trust faster than a feature that almost works.

Bad implementation does not stay technical. It leaks into sales calls and shows up in churn. Ritesh Osta, co-founder of Insightstap, has pointed to this clearly. The fix is not more tools. It is cleaner execution.

Most teams add more wires. The best teams fix the flow. Want to go deeper into how teams are structuring this shift?

We are not in the "AI replaces engineers" era; we are in the "AI reveals weak engineering discipline" era. Discipline is the only thing that actually compounds here.

If you are building SaaS and AI products with real constraints and care more about judgment than hype, this newsletter is for you.

We broke our Wednesday publishing streak. The irony?

We were experimenting with AI-assisted workflows to move faster. Turns out, AI can help draft content, but it cannot ship discipline.